On Human Predictions with Explanations and Predictions of Machine Learning Models: A Case Study on Deception Detection

Vivian Lai and Chenhao Tan.

In Proceedings of ACM FAT* Conference 2019.

Abstract:

Humans are the final decision makers in critical tasks that involve ethical and legal concerns, ranging from recidivism prediction, to medical diagnosis, to fighting against fake news. Although machine learning models can sometimes achieve impressive performance in these tasks, these tasks are not amenable to full automation. To realize the potential of machine learning for improving human decisions, it is important to understand how assistance from machine learning models affects human performance and human agency.

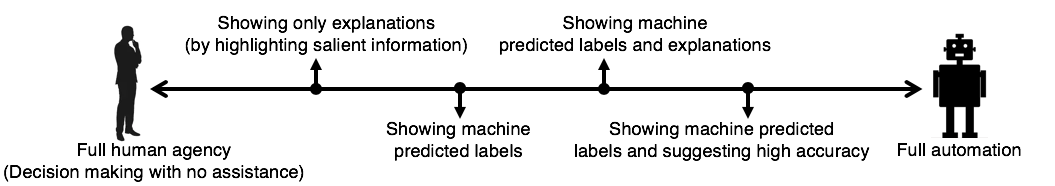

In this paper, we use deception detection as a testbed and investigate how we can harness explanations and predictions of machine learning models to improve human performance while retaining human agency. We propose a spectrum between full human agency and full automation, and develop varying levels of machine assistance along the spectrum that gradually increase the influence of machine predictions. We find that without showing predicted labels, explanations alone slightly improve human performance in the end task. In comparison, human performance is greatly improved by showing predicted labels (>20% relative improvement) and can be further improved by explicitly suggesting strong machine performance. Interestingly, when predicted labels are shown, explanations of machine predictions induce a similar level of accuracy as an explicit statement of strong machine performance. Our results demonstrate a tradeoff between human performance and human agency and show that explanations of machine predictions can moderate this tradeoff.

[PDF] [Slides] [Data & demo] (Note that the demo may differ from the paper as we continually develop our thinking on human + machine!)

Important update:

If you read the paper before 01/10/19, there is a new version of the paper.

We found a bug in our code and replicated the affected experiments.

If you do not want to re-read the paper, the main change concerns this sentence in the abstract:

"explanations alone do not statistically significantly improve human performance in the end task"

-> "explanations alone slightly improve human performance in the end task".

@inproceedings{lai+tan:19,
  author = {Vivian Lai and Chenhao Tan},
  title = {On Human Predictions with Explanations and Predictions of Machine Learning Models: A Case Study on Deception Detection},
  year = {2019},
  booktitle = {Proceedings of FAT*}
}