Explaining Why: How Instructions and User Interfaces Impact Annotator Rationales When Labeling Text Data

Jamar L. Sullivan, Will Brackenbury, Andrew McNutt, Kevin Bryson, Kwam Byll, Yuxin Chen, Michael Littman, Chenhao Tan, Blase Ur

In Proceedings of NAACL 2022.

Abstract:

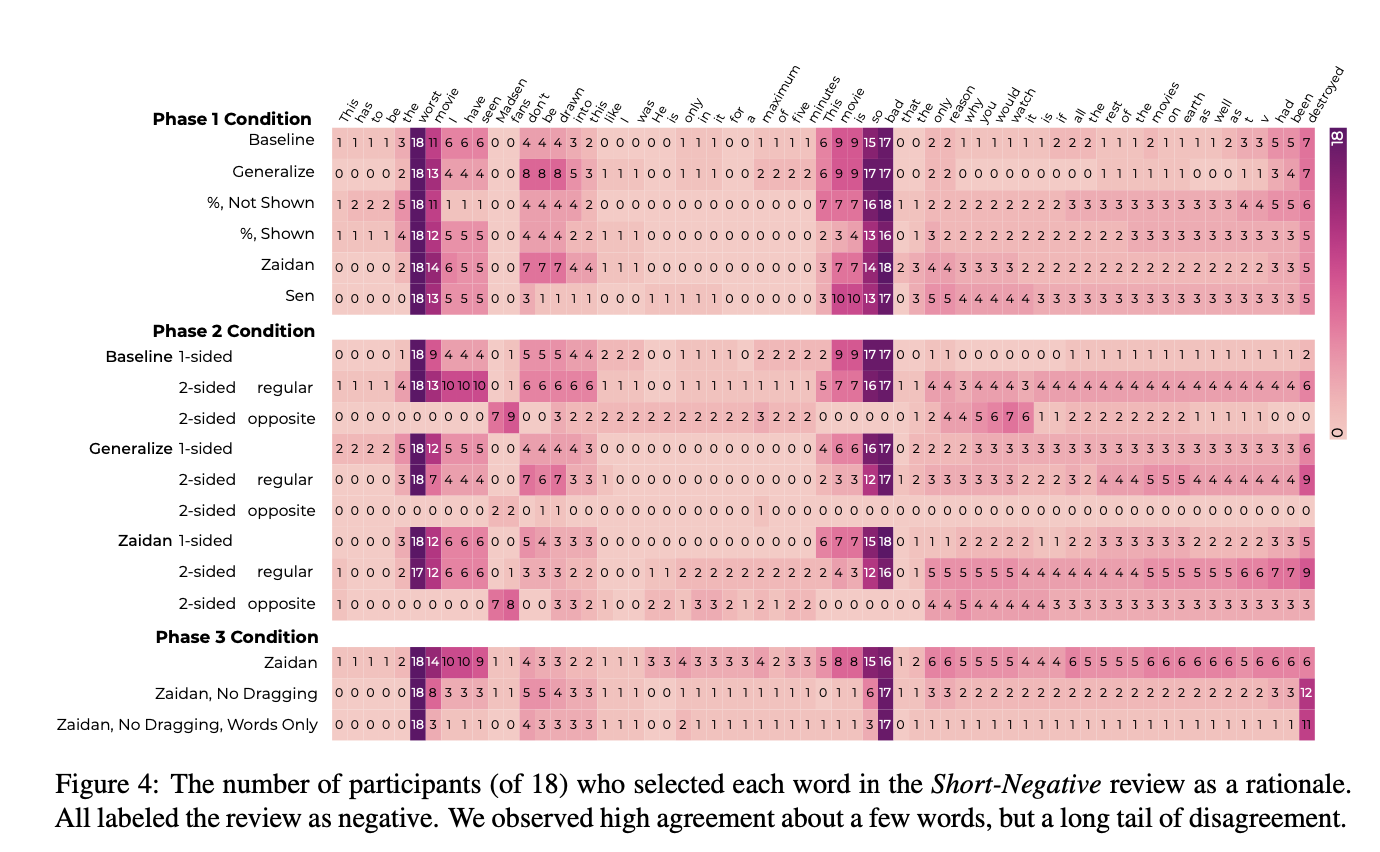

In the context of data labeling, NLP researchers are increasingly interested in having humans select rationales, a subset of input tokens relevant to the chosen label. We conducted a 332-participant online user study to understand how humans select rationales, especially how different instructions and user interface affordances impact the rationales chosen. Participants labeled ten movie reviews as positive or negative, selecting words and phrases supporting their label as rationales. We varied the instructions given, the rationale-selection task, and the user interface. Partic- ipants often selected about 12% of input tokens as rationales, but selected fewer if unable to drag over multiple tokens at once. Whereas participants were near unanimous in their data labels, they were far less consistent in their rationales. The user interface affordances and task greatly impacted the types of rationales chosen. We also observed large variance across participants.

[PDF]

@inproceedings{sullivan+etal:22,

author = {Jamar L. Sullivan and Will Brackenbury and Andrew McNutt and Kevin Bryson and Kwam Byll and Yuxin Chen and Michael Littman and Chenhao Tan and Blase Ur},

title = {Explaining Why: How Instructions and User Interfaces Impact Annotator Rationales When Labeling Text Data},

year = {2022},

booktitle = {Proceedings of NAACL}

}